As the decade progresses, the enterprise march to the cloud appears to have intensified. Beyond the handful of familiar cloud service providers, or hyperscalers, several others have emerged to challenge the market share of the big three:

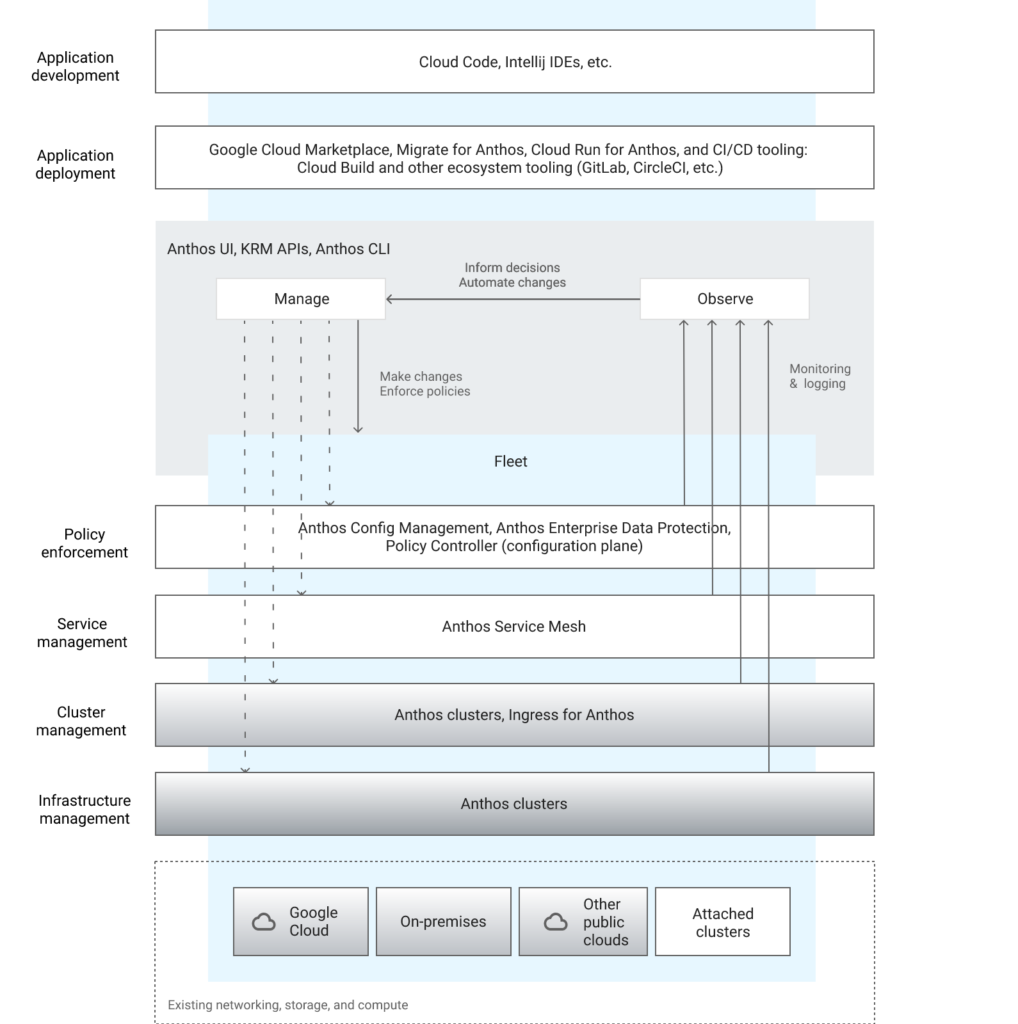

Google has a strong hyperscaler offering in Google Cloud (GCP), whose features and price points position it well in many enterprise plans for cloud expansion this decade. Companies that include GCP in their expansion plans often have internal products and product components that bridge more than one technology stack. Microsoft’s .NET technology, now open source, is a popular stack in which many companies have significant on-premises investment. Microsoft would of course prefer that on-premises workloads migrate to Azure, and it has a significant set of offerings to help that along. However, companies are increasingly considering, and moving to, .NET on other hyperscalers such as AWS and GCP, either for the simplification that comes from unifying service vendors or because of other constraints such as personnel and application dependencies. GCP’s trajectory in the marketplace has been to unify and increase enterprise support with offerings such as Anthos for a common containerized platform and Apigee for API-driven product development. So while GCP has a small share of the overall pie, that share is increasing every year, and running all technology stacks across multiple hyperscalers is becoming the norm rather than the exception.

Motivation

It is helpful to start by considering the motivation for this path. There are many potential motivating factors, but the most compelling one seems to be the power of choice. Technology stacks that work across multiple hyperscalers are more valuable than technology that works on only one, despite the popular trends in marketing. This is of course a broad generalization, and different factors come into play as you drill down into the various technologies. With choice, however, comes the freedom to move unconstrained and to respond to business challenges, such as acquiring a company on a different tech stack, choosing a platform because of application interdependencies, or simply purchasing for perceived value without vendor lock-in. All of these factors can be involved in an overall decision. People in builder roles often have little to no influence on vendor selection or lock-in. In those roles it is even more helpful to be able to take a technology stack across hyperscalers.

From my perspective there are many reasons to pursue this path. Not all of them will apply in every scenario, but from a true open-source perspective freedom of choice is hard to argue with.

Approach

The approach to .NET technology stacks on GCP can be considered with a standard cloud-migration toolset. Re-host, Re-platform, and Re-architect are the three primary approaches to application migration and modernization. These general buckets are the same for other technology stacks and other hyperscalers, so there is nothing unique there. Re-hosting is commonly called “lift-and-shift”: machines move from a datacenter to the cloud with the same configuration and operating system. Re-platforming is sometimes called “containerization”, referring to the work of preparing an application to move from an on-premises environment to a hosted application service on a hyperscaler. Re-architecting refers to deconstructing and reconstructing applications and services on hyperscaler environments, often combined with a microservices approach.

Open Source

It is important to note that the vast majority of hyperscalers are built on the backbone of open-source operating systems, mostly Linux distributions. The reason is that as scale grows and virtualized machines get smaller, managing licensing at the OS level becomes vastly more complex. This brings to light two general truths:

- Running the Microsoft .NET stack on Linux is crucial – .NET Core and its successors are key

- Containerization to a Kubernetes (K8s) platform of some kind is on the critical path

These become increasingly important as you progress from Re-host to Re-platform and Re-architect. The good news is that the same patterns mentioned here apply in principle to many other hyperscalers.

Re-host

GCP offers Compute Engine, a service for hosting virtual machines in the cloud. Creating a new VM instance in Compute Engine offers two direct Windows options:

- Windows Server – the three most recent releases, as Datacenter or Datacenter Core

- SQL Server on Windows Server – the three most recent releases of Windows Server Datacenter plus a SQL Server license

Both of these options are billed per second. As an experiment, I allocated two VMs on a personal account for development, one with each of the above options. I installed Visual Studio on both, alongside SQL Server on one of them. I am very frugal with development time on each one, spending no more than an hour at a time and then shutting down the VM instance. On a trial account, it is pretty amazing how much you can get done on the initial $300 credit given for signing up.

The alternative to a per-second, pay-as-you-go OS license is to bring your own Windows Server licensing from Microsoft, and there are various programs to assist with that endeavor, including Software Assurance.

Re-hosting can be the most expensive option, but it offers the greatest freedom in application migration because things move to the cloud exactly as they currently are. Microsoft technology stack applications can be moved straight from Windows Server in the datacenter to a replica of the machine in Compute Engine. If re-hosting is your approach, Compute Engine committed-use discounts can offer attractive cost savings in exchange for a one- or three-year commitment, which far too many overlook. With this option you are running .NET on Windows Server on GCP, which is the same stack as on other hyperscalers or in datacenter environments.
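To make the per-second billing concrete, here is a minimal C# sketch of the arithmetic behind a short development session. The hourly rates and the `BillingEstimate` helper are hypothetical and exist only for illustration; they are not real GCP prices. The estimate also ignores disk, network, and any minimum-usage rules.

```csharp
using System;

// Hypothetical hourly rates (USD) for illustration only. Look up real prices
// for your machine type, region, and Windows or SQL Server license.
const decimal vmHourlyRate = 0.20m;
const decimal licenseHourlyRate = 0.18m;

// One development session: 55 minutes of VM uptime before shutting down.
TimeSpan session = TimeSpan.FromMinutes(55);
decimal perSession = BillingEstimate.SessionCost(session, vmHourlyRate + licenseHourlyRate);

Console.WriteLine($"Estimated cost per session: {perSession:F4} USD");
Console.WriteLine($"Sessions covered by a 300 USD credit: {Math.Floor(300m / perSession)}");

static class BillingEstimate
{
    // Per-second billing: charge = seconds used * (hourly rate / 3600).
    public static decimal SessionCost(TimeSpan uptime, decimal hourlyRate) =>
        Math.Round((decimal)uptime.TotalSeconds * hourlyRate / 3600m, 4);
}
```

Swapping in a discounted hourly rate gives a rough comparison between on-demand and committed-use pricing for a VM that runs continuously.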

Re-platform

Re-platforming can offer economies of scale and commonality: building a service mesh platform on a hyperscaler provides a common target platform for large numbers of applications. Re-platforming also offers a tremendous amount of freedom to move between datacenters and hyperscalers, as Kubernetes service mesh offerings are readily available on datacenter platforms such as Tanzu as well as on hyperconverged infrastructure (HCI) offerings from hyperscalers such as Azure Stack, AWS Outposts, or Google Anthos.

Re-architect

Many critical-path applications are heavily depended on over time and tend to morph into monoliths. Migrating a monolith to the cloud presents challenges of complexity and interdependency as well as scale. Two primary paths have emerged in the re-architecting trend: API-driven development and microservices.

API-driven development takes the critical-path features of a monolith and splits them out first into a service mesh layer made up of a planned API middle tier and a data back-end tier, then connects them to one or more front ends for user interaction. Apigee, GCP’s cloud-agnostic API management service, outlines this approach to modernizing a monolith into microservices at https://cloud.google.com/apigee

The microservices approach upgrades the architecture of a monolithic application as part of migrating it to a hyperscaler. A microservice architecture also takes advantage of native hyperscaler offerings, such as serverless functions or step-function services, infrastructure-as-a-service, and connections to many other services directly and through cloud SDKs. GCP offers connection to its cloud services through an SDK, the .NET Cloud Client Libraries: https://cloud.google.com/dotnet/docs/reference.
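As a rough illustration of what calling a GCP service from .NET looks like, the sketch below lists Cloud Storage buckets with the `Google.Cloud.Storage.V1` NuGet package. Cloud Storage is my choice of example service and is not discussed above. Reading the project ID from a `GOOGLE_CLOUD_PROJECT` environment variable is also an assumption, and the code expects Application Default Credentials to be configured.

```csharp
using System;
using Google.Cloud.Storage.V1;

// Assumption: the project ID is supplied through an environment variable.
string projectId = Environment.GetEnvironmentVariable("GOOGLE_CLOUD_PROJECT")
    ?? throw new InvalidOperationException("Set GOOGLE_CLOUD_PROJECT first.");

// Create() picks up Application Default Credentials from the environment.
StorageClient client = StorageClient.Create();

// ListBuckets pages through the project's buckets lazily as they are enumerated.
foreach (var bucket in client.ListBuckets(projectId))
{
    Console.WriteLine(bucket.Name);
}
```

The same code runs on Windows or Linux, which is what makes it useful across the re-host, re-platform, and re-architect paths.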

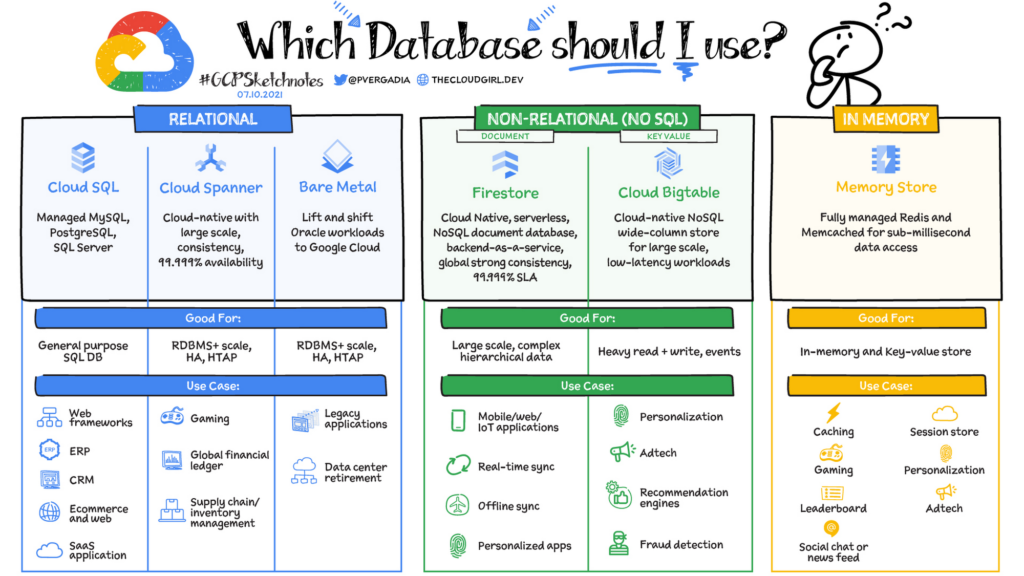

Data Layer – GCP describes its SQL Server options at https://cloud.google.com/sql-server, including Cloud SQL for SQL Server as a managed service. There is also a Database Migration API, which offers a managed service for migrating data to Cloud SQL. For a more complete picture of GCP database options, a shout-out to Priyanka Vergadia for the accompanying image and a great blog article: https://cloud.google.com/blog/topics/developers-practitioners/your-google-cloud-database-options-explained

These database options come with SDKs and examples showing how to connect using the C# Cloud Client Libraries. Here is a link to a Bigtable example: https://cloud.google.com/bigtable/docs/samples-c-sharp-hello

In addition to the database options, BigQuery provides query access to data warehouses and analytics.
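A minimal sketch of querying BigQuery from C# with the `Google.Cloud.BigQuery.V2` NuGet package might look like the following. The public `usa_names` dataset and the `GOOGLE_CLOUD_PROJECT` environment variable are assumptions chosen for illustration, and Application Default Credentials are assumed to be configured.

```csharp
using System;
using Google.Cloud.BigQuery.V2;

// Assumption: the billing project ID is supplied through an environment variable.
string projectId = Environment.GetEnvironmentVariable("GOOGLE_CLOUD_PROJECT")
    ?? throw new InvalidOperationException("Set GOOGLE_CLOUD_PROJECT first.");

BigQueryClient client = BigQueryClient.Create(projectId);

// Illustrative query against a public dataset; replace with your own tables.
string sql = @"
    SELECT name
    FROM `bigquery-public-data.usa_names.usa_1910_2013`
    WHERE state = 'TX'
    LIMIT 5";

// ExecuteQuery runs the query job and returns rows once it has completed.
BigQueryResults results = client.ExecuteQuery(sql, parameters: null);

foreach (BigQueryRow row in results)
{
    Console.WriteLine(row["name"]);
}
```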

All in all, re-architecting solutions is a viable path with clear options outlined for .NET technologies on GCP.

Conclusion

Deploying .NET workloads on Google Cloud is viable and achievable with the right focus. Re-hosting on any operating system at a per-second charge is a quick solution. Re-platforming by building Anthos service mesh layers on Kubernetes with GKE is a valuable path towards hybrid multi-cloud environments. Re-architecting builds purposeful API service mesh layers with Apigee, Cloud Functions, and other services to produce live cloud environments that are valuable to the business.

I am personally excited about Microsoft’s .NET technology on its journey to a fully open-source technology stack. I am also excited about the pathways to deploying .NET technology stacks on Google Cloud and on all of the world’s leading hyperscalers, which are necessary to realize the full value of open-source efforts.